An Intellyx BrainBlog by Jason English | Part 3 of the Auguria Data Experience Series

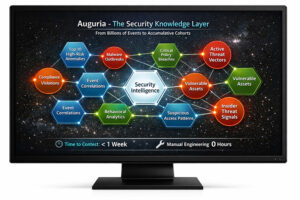

In our previous chapter, my colleague Eric Newcomer described how intelligent data handling and GenAI form a security knowledge layer (SKL) to help SOC teams deal with the cognitive load of processing and analyzing immense streams of system and event data for significant anomalies and alerts.

In our previous chapter, my colleague Eric Newcomer described how intelligent data handling and GenAI form a security knowledge layer (SKL) to help SOC teams deal with the cognitive load of processing and analyzing immense streams of system and event data for significant anomalies and alerts.

While some manual data engineering processes have been transformed into automation and AI workloads, we’ve all been in the business of ‘data wrangling’ for quite a while in order to make so much data meet its intended purpose. For most companies, that means ingesting, normalizing, and tagging data, then doing investigations from there.

Stay with me here as I digress a bit. For the last couple of decades, enterprises have used session data to drive the intelligence behind personalization and targeting in e-commerce and social media. Web empires were founded on instantly recommending the next product or media you might be interested in, and on reading reviews and comments from people like you, so you would pay attention or purchase something.

Behind the scenes, marketing teams were looking at user clickstreams and account session data to group you into cohorts of other demographically like-minded individuals who might buy certain categories of products. You know – single dog owners in London, or truck-driving moms in Texas. Leading web properties spent millions of hours of data engineering labor to put such responsive algorithms in place.

What if security and operations data could be personalized to your most critical concerns about risk and cost in a similar way, without so much effort? Wouldn’t that separate the most interesting signals from the rest of the uninteresting noise?

The trick isn’t data processing, it’s data understanding

Software and database vendors have come up with novel approaches for bringing in security and observability data at higher rates, for instance, by reordering ETL (extract, transfer, and load) processes with ELT (extract, load, then transfer). Or, by dumping everything into a “warm” data lake so it is readily searchable. Or, reducing the volume somewhat by filtering or sampling data as it comes in, as I discussed in the first chapter of this series.

Whatever processing trick is applied to the data, an analyst in the SOC would have a hard time knowing if this incoming data is ready to use without deeply understanding the architecture of the data source and its eventual destination. Such verification would usually require an expert team of data engineers working into the wee hours to normalize the data so that it is relevant for the enterprise’s specific environment.

The knowledge required for that normalization would be partially surfaced by an SKL, of course, but the volume of data at the top of the funnel is too great for humans to make manual comparisons on the fly, and the variability (or cardinality) of data is too high to reliably automate normalization.

Before you can start looking at alerts and detecting anomalies, you need to understand what data you need, and what data you don’t need – a problem that isn’t addressed by existing data processing, ingestion, and data transfer tools.

Understanding the real chokepoint of data engineering

There are a lot of really slick-looking commercial security and observability dashboards these days. Lots of them were built on top of open-source Grafana visualizations. That’s great news if you’re a practitioner, but if you are a software vendor, it drains another competitive moat.

A cool screen is no longer a big deal if anyone can build their own dashboard with a free tool and a little effort. The only way to differentiate your data visualization is by reliably improving the value of the data itself for the business, by improving the user’s understanding of the data they are looking at…

— Read the entire BrainBlog article on the Auguria site at: https://hubs.la/Q043CNJN0.